CSC352 MapReduce/Hadoop Class Notes

Outline

- History

- Based on Functional Programming

- Infrastructure, Streaming

- Submitting a Job

- Smith Cluster

- Example 1: Java

- Example 2: Python (streaming)

- Useful Commands

History

- Introduced in 2004 [1]

- MapReduce is a patented software framework introduced by Google to support distributed computing on large data sets on clusters of computers [1]

- 2010: first dedicated conference, the First International Workshop on MapReduce and its Applications (MAPREDUCE'10) [2]. Interesting tidbit: nobody from Google was on the planning committee; its members were mostly from INRIA.

Siblings and Related Projects

- Hadoop: open source, written in Java with a few routines in C. Won the terabyte sort benchmark in 2008 [3]

- Apache Hadoop Wins Terabyte Sort Benchmark

One of Yahoo's Hadoop clusters sorted 1 terabyte of data in 209 seconds, which beat the previous record of 297 seconds in the annual general purpose (Daytona) terabyte sort benchmark. The sort benchmark, which was created in 1998 by Jim Gray, specifies the input data (10 billion 100-byte records), which must be completely sorted and written to disk. This is the first time that either a Java or an open source program has won. Yahoo is both the largest user of Hadoop, with 13,000+ nodes running hundreds of thousands of jobs a month, and the largest contributor, although non-Yahoo usage and contributions are increasing rapidly.

Their implementation also proved to be highly scalable, and last year took first place in the Terabyte Sort Benchmark. To do so, they used 910 nodes, each with 2 cores (a total of 1820 cores), and were able to keep the data entirely in memory across the nodes. With this many machines and their open-source MapReduce implementation, they were able to sort one terabyte of data in 209 seconds, in contrast to the previous record of 297 seconds. Not one to be left behind, Google showed that they were still quite far ahead, claiming they could do the same with their closed-source implementation in only 68 seconds. The only detail they give is that they used 1000 nodes, but since the result is neither verifiable nor mentioned on the official Sort Benchmark Home Page, it may not be worthwhile to discuss it further.
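The figures quoted above can be sanity-checked with a little arithmetic. The Python sketch below uses only numbers stated in the text (10 billion 100-byte records, 910 nodes with 2 cores each, 209 seconds versus 297 seconds). The variable names are illustrative, and the derived rates are simple averages over the whole run, not measurements reported by either source.

```python
# Back-of-the-envelope check of the 2008 terabyte sort figures quoted above.
# All inputs come from the text; the derived rates are averages, not measurements.

RECORDS = 10_000_000_000   # 10 billion records
RECORD_BYTES = 100         # each record is 100 bytes
NODES = 910
CORES_PER_NODE = 2
HADOOP_SECONDS = 209       # Hadoop's winning time
PREVIOUS_SECONDS = 297     # previous record

total_bytes = RECORDS * RECORD_BYTES          # 10**12 bytes, i.e. 1 terabyte
total_cores = NODES * CORES_PER_NODE          # 1820 cores

cluster_rate = total_bytes / HADOOP_SECONDS   # average bytes sorted per second
per_node_rate = cluster_rate / NODES          # average bytes per second per node
speedup = PREVIOUS_SECONDS / HADOOP_SECONDS   # improvement over the old record

print(f"input size:     {total_bytes:.2e} bytes")
print(f"total cores:    {total_cores}")
print(f"cluster rate:   {cluster_rate / 1e9:.2f} GB/s")
print(f"per-node rate:  {per_node_rate / 1e6:.2f} MB/s")
print(f"speedup vs old: {speedup:.2f}x")
```

The input does come to exactly one terabyte (10^12 bytes). Averaged over the run, the cluster sorted a little under 5 GB per second, or roughly 5 MB per second per node, and finished about 1.4 times faster than the previous record.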


- Phoenix [4]

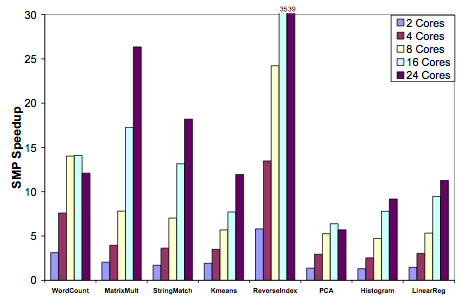

Another implementation of MapReduce, named Phoenix [4], was created to make the best use of multi-core and multiprocessor systems. Written in C++, it was used by its authors to apply the MapReduce programming paradigm to dense integer matrix multiplication, as well as to linear regression and principal components analysis on a matrix (amongst other applications). The following graph shows the speedup they achieve as the number of cores varies:

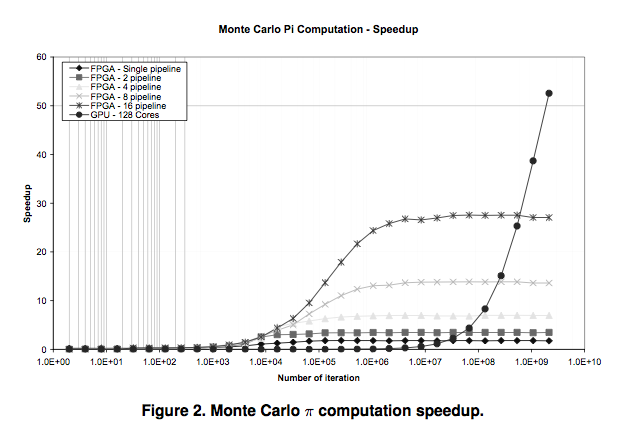
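To make the idea of expressing matrix multiplication as a MapReduce job concrete, here is a minimal Python sketch. It is not Phoenix's API and not the authors' implementation: the function names (`map_row`, `shuffle`, `reduce_cell`, `matmul_mapreduce`) are hypothetical, and the decomposition (one map task per row of the first matrix, one reduce per output cell) is only one of several possible choices. The `mapper` argument defaults to Python's built-in sequential `map`; a parallel map with the same calling convention (for example `multiprocessing.Pool.map`) could be passed instead to spread the map tasks over several cores.

```python
from collections import defaultdict
from functools import reduce
from operator import add


def map_row(task):
    """Map step: for one row of A, emit ((i, j), partial product) pairs."""
    i, row, b = task
    return [((i, j), row[k] * b[k][j])
            for k in range(len(row))
            for j in range(len(b[0]))]


def shuffle(mapped):
    """Group emitted values by key, as a runtime does between map and reduce."""
    groups = defaultdict(list)
    for pairs in mapped:
        for key, value in pairs:
            groups[key].append(value)
    return groups


def reduce_cell(values):
    """Reduce step: sum the partial products belonging to one output cell."""
    return reduce(add, values, 0)


def matmul_mapreduce(a, b, mapper=map):
    """Multiply matrices a and b (lists of lists) in map/shuffle/reduce style."""
    tasks = [(i, row, b) for i, row in enumerate(a)]
    groups = shuffle(mapper(map_row, tasks))
    return [[reduce_cell(groups[(i, j)]) for j in range(len(b[0]))]
            for i in range(len(a))]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product = matmul_mapreduce(a, b)
print(product)  # prints [[19, 22], [43, 50]]
```

Because each map task touches only its own row of the first matrix and each reduce touches only the values for its own key, neither step needs shared mutable state. That independence is what lets a runtime distribute the tasks across cores.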

- GPU/FPGA and MapReduce [5]

References

- [1] Wikipedia, MapReduce: http://en.wikipedia.org/wiki/MapReduce

- [2] MapReduce 2010: http://graal.ens-lyon.fr/mapreduce/

- [3] Apache Hadoop Wins Terabyte Sort Benchmark: http://developer.yahoo.net/blogs/hadoop/2008/07/apache_hadoop_wins_terabyte_sort_benchmark.html

- [4] Evaluating MapReduce for Multi-core and Multiprocessor Systems, Colby Ranger, Ramanan Raghuraman, Arun Penmetsa, Gary Bradski, Christos Kozyrakis, Computer Systems Laboratory, Stanford University: http://csl.stanford.edu/~christos/publications/2007.cmp_mapreduce.hpca.pdf

- [5] Map-reduce as a Programming Model for Custom Computing Machines, Jackson H.C. Yeung, C.C. Tsang, K.H. Tsoi, Bill S.H. Kwan, Chris C.C. Cheung, Anthony P.C. Chan, Philip H.W. Leong, Dept. of Computer Science and Engineering, The Chinese University of Hong Kong, Shatin NT, Hong Kong, and Cluster Technology Limited, Hong Kong Science and Technology Park, NT, Hong Kong: http://mesl.ucsd.edu/gupta/cse291-fpga/Readings/mr_fccm08.pdf