XGrid Tutorial Part 2: Processing Wikipedia Pages

| This tutorial is intended for running distributed programs on an 8-core MacPro that is set up as an XGrid Controller at Smith College. Most of the steps presented here should work on other Apple grids, except for the specific details of login and host addresses. Another document details how to access the 88-processor XGrid in the Science Center at Smith College. This document is the second part of a tutorial on the XGrid and follows the Monte Carlo tutorial. Make sure you go through the Monte Carlo tutorial first. |

Setup

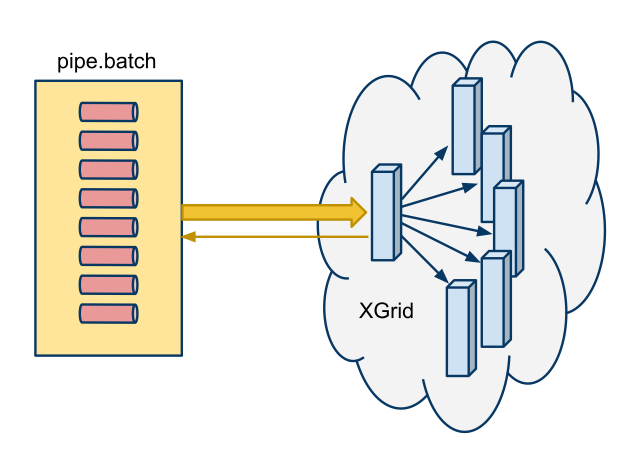

The main setup is shown below.

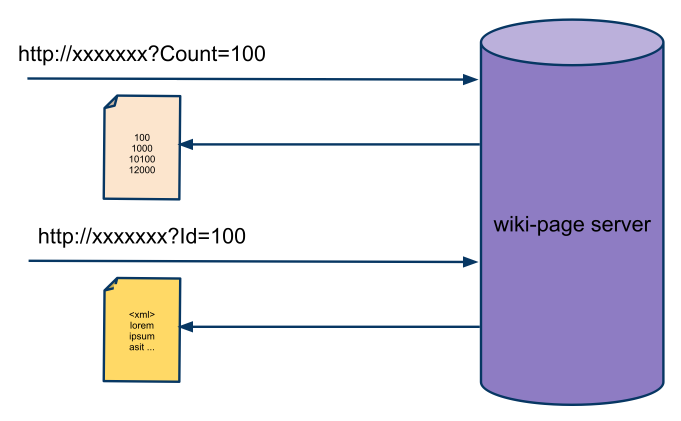

See the Project 2 page for more information on accessing the server of Wikipedia pages.

In summary, any computer can issue HTTP requests to the URL of the wiki-page server. Appending ?Count=nnnn to the URL returns a list of nnnn Ids, and appending ?Id=nnnn returns the contents of the page with the given Id.
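As a minimal sketch of the two request forms, the bash fragment below builds both URLs. The base address in WIKI_SERVER is a hypothetical placeholder (the real one is on the Project 2 page), and the function names ids_url and page_url are made up for illustration.

```shell
#!/bin/bash
# Hypothetical base URL (an assumption): replace it with the real address
# of the wiki-page server given on the Project 2 page.
WIKI_SERVER="${WIKI_SERVER:-http://wiki-server.example/pages}"

# Build the URL that asks the server for a list of N page Ids.
ids_url() {
    printf '%s?Count=%s\n' "$WIKI_SERVER" "$1"
}

# Build the URL that asks the server for the contents of one page.
page_url() {
    printf '%s?Id=%s\n' "$WIKI_SERVER" "$1"
}

# Print the two request URLs for 10 Ids and for the page with Id 1234.
ids_url 10
page_url 1234
```

To issue a request rather than just print its URL, the result can be handed to curl, for example `curl -s "$(ids_url 10)"`.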

Goal of this Tutorial

Create a Pipeline

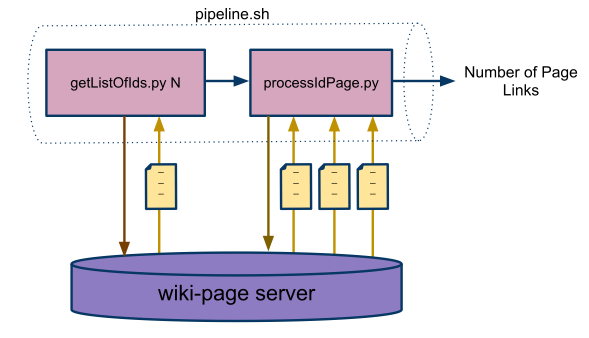

The goal is to create a pipeline of two programs (and possibly other Mac OS X commands) that will retrieve several pages from the wiki-page server and process them. The programs run as a pipeline: the output of the first is fed to the input of the second. A third program, a bash script called pipeline.sh, organizes the pipeline structure.
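The general shape of such a script can be sketched as follows. This is not the tutorial's pipeline.sh: both stages are stand-in shell functions with hypothetical names (fetch_pages, process_pages), and the first one prints fake page text instead of contacting the server, so that the sketch runs anywhere.

```shell
#!/bin/bash
# Sketch of a two-stage pipeline. Both stages are stand-ins (assumptions),
# not the actual programs used in this tutorial.

# Stage 1 stand-in: a real version would fetch pages from the wiki-page
# server. Here it prints one line of fake page text per Id argument.
fetch_pages() {
    for id in "$@"; do
        printf 'page %s sample text\n' "$id"
    done
}

# Stage 2 stand-in: reads pages on standard input, one per line,
# and prints the number of words found on each line.
process_pages() {
    awk '{ print NF }'
}

# The pipe connects the standard output of stage 1
# to the standard input of stage 2.
fetch_pages 10 20 | process_pages
```

Keeping each stage a filter that reads standard input and writes standard output means either one can be swapped for a real program, or extended with other Mac OS X commands, without changing the rest of the script.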

The figure below illustrates the process.

Submit a Batch of Jobs to the XGrid