CSC352 Homework 4 Solution

--D. Thiebaut 20:24, 25 April 2010 (UTC)

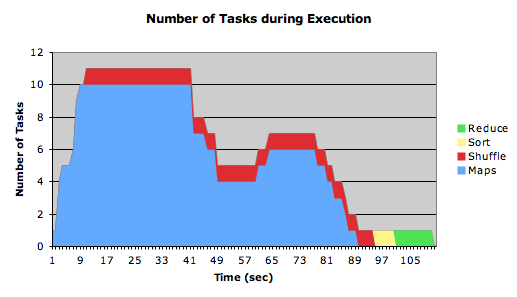

Problem #1: Timeline

Several observations are in order:

- The cluster only has 5 task-tracker nodes

- The default for Hadoop is two map tasks per task-tracker per job.

- This explains why at most 10 map tasks (5 task-trackers × 2 map tasks each) run at first. They then stop and close down while 6 more map tasks take over, with an overlap of 4 map tasks.

- The input consists of 4 files of approximately 180 MB each. Hadoop divides input files into splits of 64 MB.

- A file of about 180 MB corresponds to 3 or 4 splits, depending on its exact size. This means between 12 and 16 splits for the 4 files.

Because 16 (12+4) map tasks run altogether, we deduce that there were 16 splits in total, requiring 16 tasks: 12 ran first and were then taken down while 4 more were started.
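The arithmetic behind these observations can be checked with a short sketch. It assumes that a file is simply cut into ceil(size / 64 MB) splits, which is a simplification of what Hadoop actually does, and the names `splits_for` and `max_concurrent_maps` are made up for this illustration.

```python
import math

SPLIT_MB = 64        # split size quoted in the observations
NODES = 5            # task-tracker nodes in the cluster
MAPS_PER_NODE = 2    # default number of concurrent map tasks per task-tracker


def splits_for(file_mb, split_mb=SPLIT_MB):
    """Number of splits for one file, assuming a plain ceiling division."""
    return int(math.ceil(file_mb / float(split_mb)))


def max_concurrent_maps(nodes=NODES, per_node=MAPS_PER_NODE):
    """Largest number of map tasks that can run at the same time."""
    return nodes * per_node


if __name__ == "__main__":
    print("map tasks running at once: %d" % max_concurrent_maps())
    for size_mb in (180, 200):  # hypothetical sizes on either side of 192 MB
        per_file = splits_for(size_mb)
        print("%d MB -> %d splits per file, %d for 4 files"
              % (size_mb, per_file, 4 * per_file))
```

Under this simplified model, 16 splits for 4 files means that every file needed 4 splits, that is, each file was somewhat larger than 192 MB (3 × 64 MB).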

Problem #2

Below is a Map/Reduce pair of Python streaming programs for a job similar to the one required. Instead of reporting the unique categories, the programs return each category along with a list of the Ids of the wiki pages in which it appears.

Examples of output

category:(10th century in ireland) [10130001]
category:(1976 films) [10000001]
category:(argentine films) [10000001]
category:(economy of côte d'ivoire) [10160001]
category:(estuaries in england) [100001,200002]
category:(hospitals in north carolina) [1010001]
category:(lists of hospitals in the united states) [1010001]

Mapper

hadoop@hadoop1:~/352/dft/hw4$ cat mapper_cat.py

#!/usr/bin/env python
# mapper_cat.py
# D. Thiebaut
#
# parses a wiki page that is in xml format
# and included in <xml> ... </xml> tags.
# Example:
#
#<xml>
#<title>Breydon Water</title>
#<id>100001</id>
#<contributors>
#<contrib>
#<ip>89.240.43.102</ip>
#
#<length>3119</length></contrib>
#</contributors>
#<categories>
#<cat>Estuaries in England</cat>
#<cat>Ramsar sites in England</cat>
#<cat>Royal Society for the Protection of Birds reserves in England</cat>
#<cat>Norfolk Broads</cat>
#<cat>Sites of Special Scientific Interest in Norfolk</cat>
#</categories>
#<pagelinks>
#<page>Arthur Henry Patterson</page>
#<page>Arthur Ransome</page>
#<page>Avocets</page>
#<page>Bewick's Swan</page>
#</pagelinks>
#<text>
#Image. Breydon-north.jpg thumb 250px ... Broads . Category. Sites of Special Scientific Interest in Norfolk . . . .
#</text>
#</xml>
#
# the program outputs the categories
#
# cat 10000001.xml | ./mapper.py
#
#
# 1976 films 10000001
# Argentine films 10000001
# films 4 10000001
# category 3 10000001
# argentine 3 10000001
# 1976 3 10000001
# spanish 2 10000001
# language 2 10000001
# spanishlanguage 1 10000001
# que 1 10000001
# list 1 10000001
#

import sys

def grab( tag, xml ):
    """grabs the text between <tag> and </tag> in the xml text;
    returns an empty string if the tag is not found"""
    openTag = '<%s>' % tag
    index1 = xml.find( openTag )
    if index1==-1:
        return ""
    index2 = xml.find( '</%s>' % tag, index1 )
    if index2==-1:
        return ""
    return xml[ index1+len( openTag ): index2 ]

def grabAll( tag, xml ):
    """grabs all the strings between <tag> and </tag> in xml, and
    returns them in a list"""
    openTag = '<%s>' % tag
    index2 = 0
    found = []
    while True:
        index1 = xml.find( openTag, index2 )
        if index1==-1: break
        index2 = xml.find( '</%s>' % tag, index1 )
        if index2==-1: break
        found.append( xml[ index1+len( openTag ): index2 ] )
    return found

def processInput( debug=False ):
    #--- accumulate input lines from stdin ---
    xmlText = ""
    for line in sys.stdin:
        xmlText += line.lower()

    #--- grab the Id, and the categories ---
    id = grab( "id", xmlText )
    categoryList = grabAll( "cat", xmlText )
    return ( id, categoryList )

def main( debug=False ):
    #--- get page Id and categories ---
    id, categoryList = processInput( debug )

    #--- output one "category<TAB>Id" line per category ---
    for cat in categoryList:
        print( "%s\t%s" % ( cat, id ) )

    #--- debugging information ---
    if debug:
        print( "-"*60 )
        print( "DEBUG" )
        print( "-"*60 )
        print( "id = %s" % id )
        for cat in categoryList:
            print( "cat: %s" % cat )
        print( "-"*60 )

main( False )
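To see what the mapper emits without feeding it a file on stdin, the same extraction can be tried on an in-memory string. The sketch below is not the mapper above: it swaps the `find`-based scanning for a regular expression, and the names `grab_all`, `map_page` and `SAMPLE` are hypothetical. It assumes tags carry no attributes and are not nested inside themselves.

```python
import re


def grab_all(tag, xml):
    """Return every string found between <tag> and </tag> (non-greedy match)."""
    pattern = "<%s>(.*?)</%s>" % (re.escape(tag), re.escape(tag))
    return re.findall(pattern, xml, re.DOTALL)


def map_page(xml):
    """Return the 'category<TAB>id' lines a mapper would emit for one page."""
    xml = xml.lower()                      # same normalization as the mapper
    ids = grab_all("id", xml)
    page_id = ids[0] if ids else ""        # first <id> is taken as the page Id
    return ["%s\t%s" % (cat, page_id) for cat in grab_all("cat", xml)]


# Shortened version of the sample page shown in the mapper's header comment.
SAMPLE = """<xml>
<title>Breydon Water</title>
<id>100001</id>
<categories>
<cat>Estuaries in England</cat>
<cat>Norfolk Broads</cat>
</categories>
</xml>"""

if __name__ == "__main__":
    for line in map_page(SAMPLE):
        print(line)
```

Each emitted line is a tab-separated key/value pair, which is the form Hadoop streaming expects when it sorts the mapper output by key before handing it to the reducer.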

Reducer

#!/usr/bin/env python
import sys

#--- get ready to read category, Id pairs ---
lastCategory = None
listOfIds = []

#--- input comes from STDIN ---
for line in sys.stdin:
    #--- remove leading and trailing whitespace ---
    line = line.strip()

    #--- skip lines that do not hold a category<TAB>Id pair ---
    if '\t' not in line:
        continue
    category, Id = line.split('\t', 1)

    #--- accumulate Ids for the same category ---
    if category==lastCategory:
        listOfIds.append( Id )
    else:
        #--- new category: write out the previous one, then start over ---
        if lastCategory is not None:
            print( "category:(%s)\t[%s]" % ( lastCategory, ','.join( listOfIds ) ) )
        lastCategory = category
        listOfIds = [ Id ]

#--- write last category processed to stdout ---
if len( listOfIds )!= 0:
    print( 'category:(%s)\t[%s]' % ( lastCategory, ','.join( listOfIds ) ) )
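The reducer relies on its input arriving sorted by category, which Hadoop guarantees between the map and reduce phases. A minimal sketch of that behavior, run locally on a few made-up mapper lines, is shown below. It uses `itertools.groupby` rather than the explicit `lastCategory` bookkeeping above, and `reduce_pairs` and `MAPPER_OUTPUT` are hypothetical names.

```python
from itertools import groupby


def reduce_pairs(lines):
    """Group 'category<TAB>id' lines and return one output line per category."""
    pairs = [line.rstrip("\n").split("\t", 1) for line in lines if "\t" in line]
    pairs.sort()  # stands in for Hadoop's sort between map and reduce
    output = []
    for category, group in groupby(pairs, key=lambda pair: pair[0]):
        ids = [page_id for _, page_id in group]
        output.append("category:(%s)\t[%s]" % (category, ",".join(ids)))
    return output


# Made-up mapper output, deliberately out of order.
MAPPER_OUTPUT = [
    "estuaries in england\t100001",
    "1976 films\t10000001",
    "estuaries in england\t200002",
    "argentine films\t10000001",
]

if __name__ == "__main__":
    for line in reduce_pairs(MAPPER_OUTPUT):
        print(line)
```

Without the sort, the two "estuaries in england" lines would not be adjacent and would be reported as two separate categories, which is why the reducer only needs to remember the previous key.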

Problem #3

Mapper

Reducer